Before we proceed with bilinear interpolation and its applications in image processing, let’s review some high school algebra.

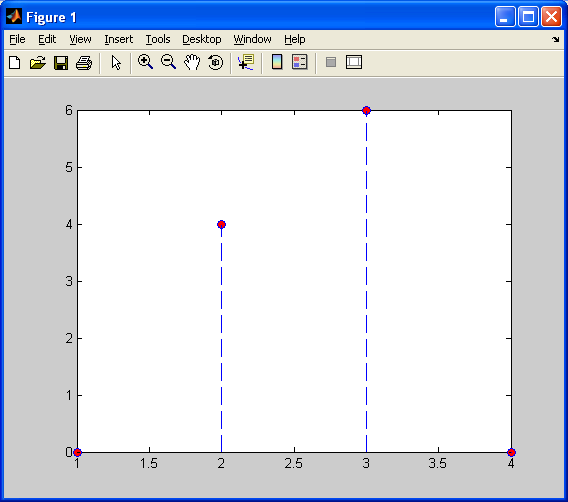

If we have a set of two data points, $x_1$ and $x_2$, as shown below, the function values at these points can be defined as:

$$y_1 = f(x_1), \qquad y_2 = f(x_2)$$

Seems simple enough, no?

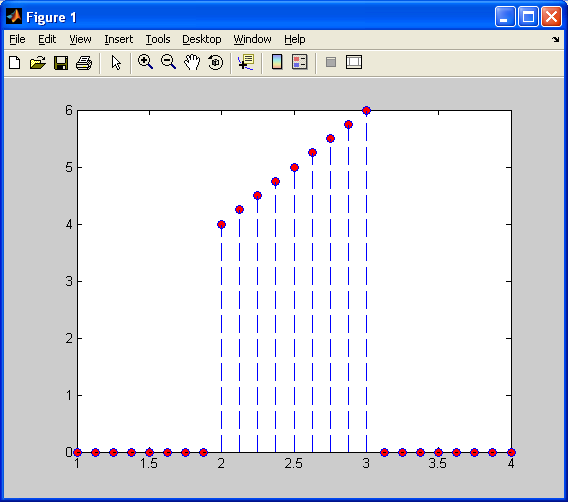

Now, assume that we want more data points in between these values. We’ve already seen what happens with nearest neighbour interpolation – we get a jagged, discontinuous approximation. We want something better! We will make the values, $y_i$, for each data point, $x_i$, where $x_1 < x_i < x_2$, fit on a line between $(x_1, y_1)$ and $(x_2, y_2)$, as shown below:

This technique is known as linear interpolation, and each data point can be calculated using the simple slope-intercept formula, $y = mx + b$, as we all learned in high school. If we separate the parameters, we can solve for each y-value according to:

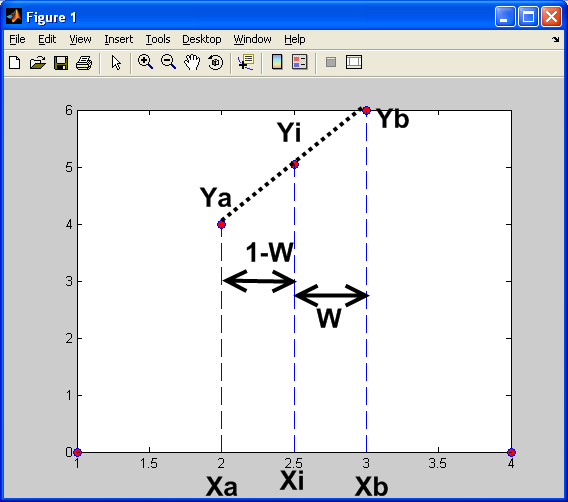

As for the weight, $W$, we can solve for it by assuming that we want to insert $N$ equally-spaced data points between $x_1$ and $x_2$. So, for each data point $x_i$, with $i = 1, \dots, N$, we have $x_i = x_1 + i\,\frac{x_2 - x_1}{N + 1}$ and $W = \frac{x_2 - x_i}{x_2 - x_1}$. Using the formula above, we can create a simple loop that calculates $W$, $(1-W)$, and $y_i$ for each $x_i$. Please refer to the diagram shown below for a visual explanation.

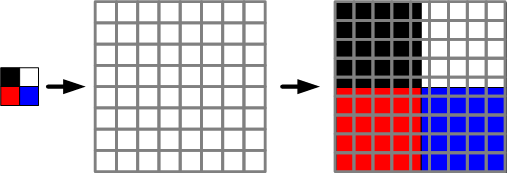
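The loop described above might look something like the sketch below. The sample values (`x1`, `y1`, `x2`, `y2`, `N`) are made up for illustration, and it assumes that `W` is the weight of the left-hand point, which is the same convention the `bli` function on this page uses.

```matlab
% Minimal sketch of 1D linear interpolation between two known points.
% All names and values here are illustrative assumptions.
x1 = 0;  y1 = 10;      % left data point and its value
x2 = 4;  y2 = 30;      % right data point and its value
N  = 3;                % number of equally-spaced points to insert

xi = zeros(1,N);       % positions of the inserted points
yi = zeros(1,N);       % interpolated values at those positions
for n = 1:N
    xi(n) = x1 + n.*(x2 - x1)./(N + 1);   % equally-spaced position
    W     = (x2 - xi(n))./(x2 - x1);      % weight of the left point
    yi(n) = W.*y1 + (1 - W).*y2;          % weighted average of y1 and y2
end
disp([xi; yi]);
```

With these numbers the inserted points sit at x = 1, 2, 3 and take the values 15, 20, 25, which all lie on the straight line joining the two original points.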

Now, let’s extend this to image processing and step it up a notch or two. So, we want to upsample an image, as shown below:

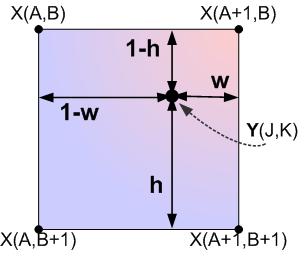

In order to do this, as we did with nearest neighbour interpolation, we need to define a transformation between the coordinates of the source image and our final (upsampled) output image. As shown below…

From the image above, we can see that when upsampling using bilinear interpolation, we are simply creating each new pixel in the target image from a weighted average of its 4 nearest neighbouring pixels in the source image. The weights, $h$, $1-h$, $w$, and $1-w$, are determined by the relative position of the new pixel compared to its neighbours. Also note that these weights represent lines in two dimensions, similar to the 1D example at the top of this page. So, in general, we can interpolate any pixel value to place in the target image as:

$$I_{new} = w\,h\,I_{11} + (1-w)\,h\,I_{12} + w\,(1-h)\,I_{21} + (1-w)(1-h)\,I_{22}$$

where $I_{11}$, $I_{12}$, $I_{21}$, and $I_{22}$ are the four neighbouring source pixels.
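As a quick numerical check of this weighted average, here is a minimal sketch for a single output pixel. The four pixel values and the fractional offsets `fx` and `fy` are made-up numbers, and the neighbour naming (`I11`, `I12`, `I21`, `I22`) is assumed to follow the `bli` function further down this page.

```matlab
% Minimal sketch: one bilinearly interpolated value from a 2x2 neighbourhood.
% The pixel values and fractional offsets are illustrative assumptions.
I11 = 10;  I12 = 20;   % I12 is the next neighbour along the first index
I21 = 30;  I22 = 40;   % I21 is the next neighbour along the second index

fx = 0.25;             % fractional offset from I11 along the first index
fy = 0.50;             % fractional offset from I11 along the second index

w = 1 - fx;            % weight of the "floor" neighbours, first index
h = 1 - fy;            % weight of the "floor" neighbours, second index

% Weighted average of the four neighbours; the four weights sum to 1
p = w.*h.*I11 + (1-w).*h.*I12 + w.*(1-h).*I21 + (1-w).*(1-h).*I22;
disp(p);
```

Here `p` comes out as 22.5. The same answer falls out of doing two 1D interpolations along the first index (giving 12.5 and 32.5) and then one along the second index, which is why the method is called "bilinear".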

The following code was used to generate the examples that follow:

```matlab
%Bilinear Interpolation function
%==========================================================================
function output_image = bli(input_image,x_res,y_res)
%==========================================================================
% output_image : a grayscale image of dimensions x_res by y_res
% input_image  : an arbitrary input image with dimensions less than
%                x_res by y_res
% x_res        : the size of the output image along its first dimension
% y_res        : the size of the output image along its second dimension
%==========================================================================

%Import my original picture file
I = input_image;

%Convert image to grayscale (intensity) values for simplicity (for now)
%Skip the conversion if the input is already a 2D grayscale image
if ndims(I) == 3
    I = rgb2gray(I);
end

%Work in double precision so that the weighted sum below is not rounded
%and saturated by integer (e.g. uint8) arithmetic
I = double(I);

%Determine the dimensions of the source image
%Note that after the grayscale conversion we only have two values here,
%not three, since the color channels are gone
[j, k] = size(I);

%Specify the new image dimensions we want for our larger output image
x_new = x_res;
y_new = y_res;

%Determine the ratio of the old dimensions compared to the new dimensions
%Referred to as S1 and S2 in my tutorial
x_scale = x_new./(j-1);
y_scale = y_new./(k-1);

%Declare and initialize an output image buffer
M = zeros(x_new,y_new);

%Generate the output image
for count1 = 0:x_new-1
    for count2 = 0:y_new-1
        %Position of the new pixel in source image coordinates
        x = count1./x_scale;
        y = count2./y_scale;

        %Weights of the "floor" neighbours (1 minus the fractional offset)
        W = 1 - (x - floor(x));
        H = 1 - (y - floor(y));

        %The four nearest neighbours in the source image
        I11 = I(1+floor(x),1+floor(y));
        I12 = I(1+ceil(x),1+floor(y));
        I21 = I(1+floor(x),1+ceil(y));
        I22 = I(1+ceil(x),1+ceil(y));

        %Weighted average of the four neighbours
        M(count1+1,count2+1) = (1-W).*(1-H).*I22 + (W).*(1-H).*I21 ...
            + (1-W).*(H).*I12 + (W).*(H).*I11;
    end
end

output_image = M;
```

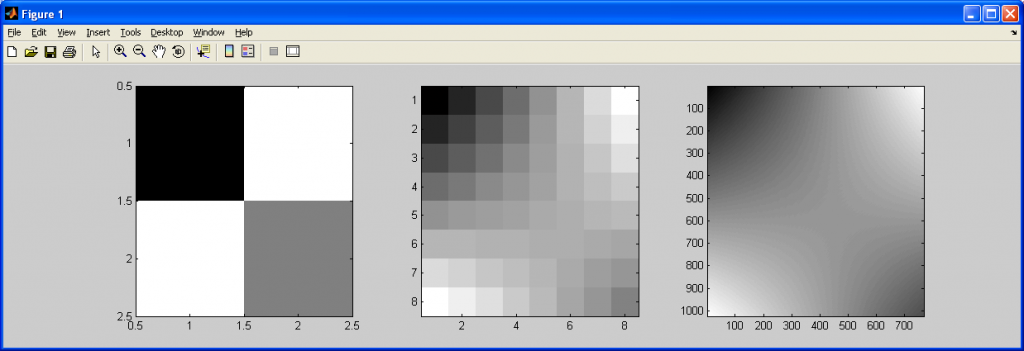

In the following image, the IMAGESC function in MATLAB was used to make the smooth transitions more noticeable. Please note that using IMAGESC instead of IMAGE will take a grayscale image and display it using a colormap rather than a set of gray levels between black and white. This is referred to as “false coloring”, since the original image does not contain any color data, only grayscale intensity values.

So, from the above images, we should be able to guess what differences this interpolation method has over nearest neighbour interpolation…

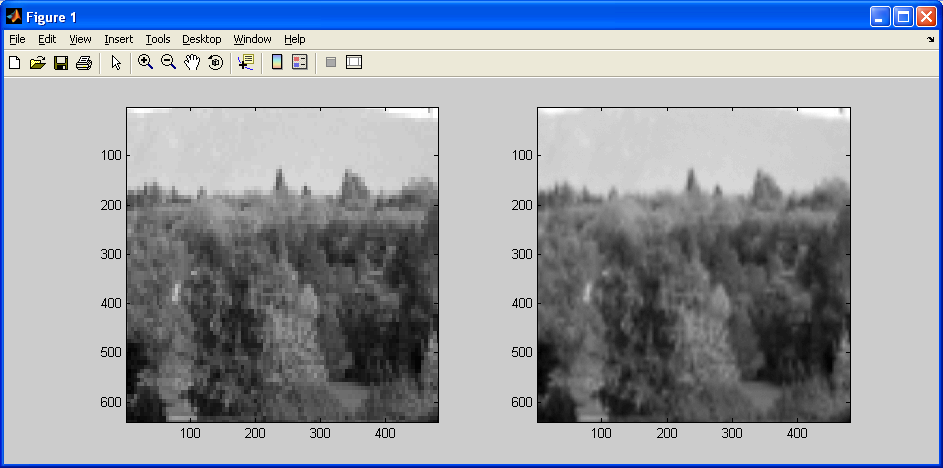

For starters, there is a smooth transition between distinct pixel values. When upsampling by a small factor, we can see a “grid” of pixels linearly changing colors. When upsampling by a very large factor, we get a very smooth gradient. This comes at the expense of a more complicated algorithm, and a slight loss of sharpness, as demonstrated below.

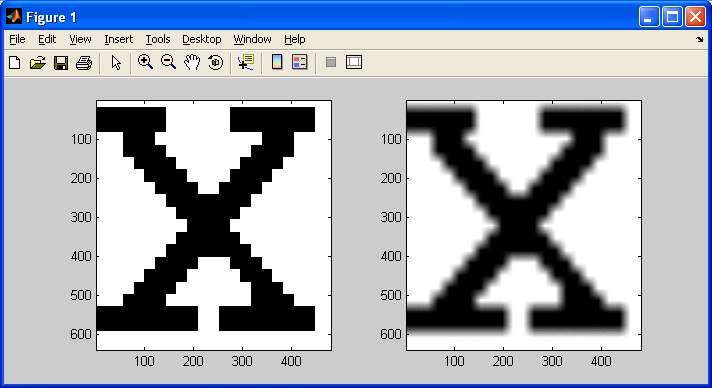

Notice two important things in the above images (NN on left, BLI on right): the image on the left has sharper edges, but the majority of the image is pixelated and “blocky”, while the image on the right has blurred edges, but the majority of the image is smoother and generally looks better.

This is even more pronounced with a source image that has only two intensity values (e.g. black and white) and is being upsampled by a large factor. As noted in the previous subsection, nearest neighbour interpolation then produces aliasing (jagged edges), an artifact that does not appear when we use bilinear interpolation. In this case, we aren’t concerned with sharp edges in our output image – we would actually like to make them LESS sharp, taking advantage of the artifact which bilinear interpolation introduces: blurring.
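The two-intensity case can be illustrated in one dimension with a rough sketch like the one below. It uses MATLAB's built-in `interp1` rather than the `bli` function from this page, and the tiny `src` signal and the number of query points are arbitrary choices made for the example.

```matlab
% Minimal sketch: upsample a 1D black/white step edge two ways.
% The signal and the number of query points are illustrative assumptions.
src = [0 0 1 1];                        % only two intensity values
xs  = 1:numel(src);                     % source sample positions
xq  = linspace(1, numel(src), 13);      % denser query positions

nn  = interp1(xs, src, xq, 'nearest');  % nearest neighbour
lin = interp1(xs, src, xq, 'linear');   % linear, the 1D analogue of BLI

disp(nn);
disp(lin);
```

The nearest neighbour result still contains only the values 0 and 1, so the edge stays a hard jump, while the linear result ramps through intermediate gray levels between the two middle samples. In 2D, that ramp is the blurring that softens the jagged edges.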

There are advantages to each of these algorithms beyond the simple tradeoffs between code simplicity and speed, depending on the type of data you are working with (e.g. photographs vs. simple bitmap images). This should be kept in mind when learning any new techniques in image processing.